Every academic year, thousands of neurodivergent students enter higher education with the capability to succeed, and thousands leave without completing their degrees. An investigation by the North East Autism Society (2025), using data from the Higher Education Statistics Agency, found that autistic student dropout rates stood at 31% in 2017, 2018, and 2019 and rose to 37% when lockdown began in 2020. Autistic students remain the most likely disability group to leave university before completing their degree. The proportion achieving a first or upper second classification remains lower than that of any other disability group, and their progression into graduate-level employment is the lowest of all groups (Office for Students).

These are not statistics about academic ability. They are statistics about system design. And the systems most responsible for these outcomes are the ones closest to the student experience: assessment, feedback, and adjustment pathways.

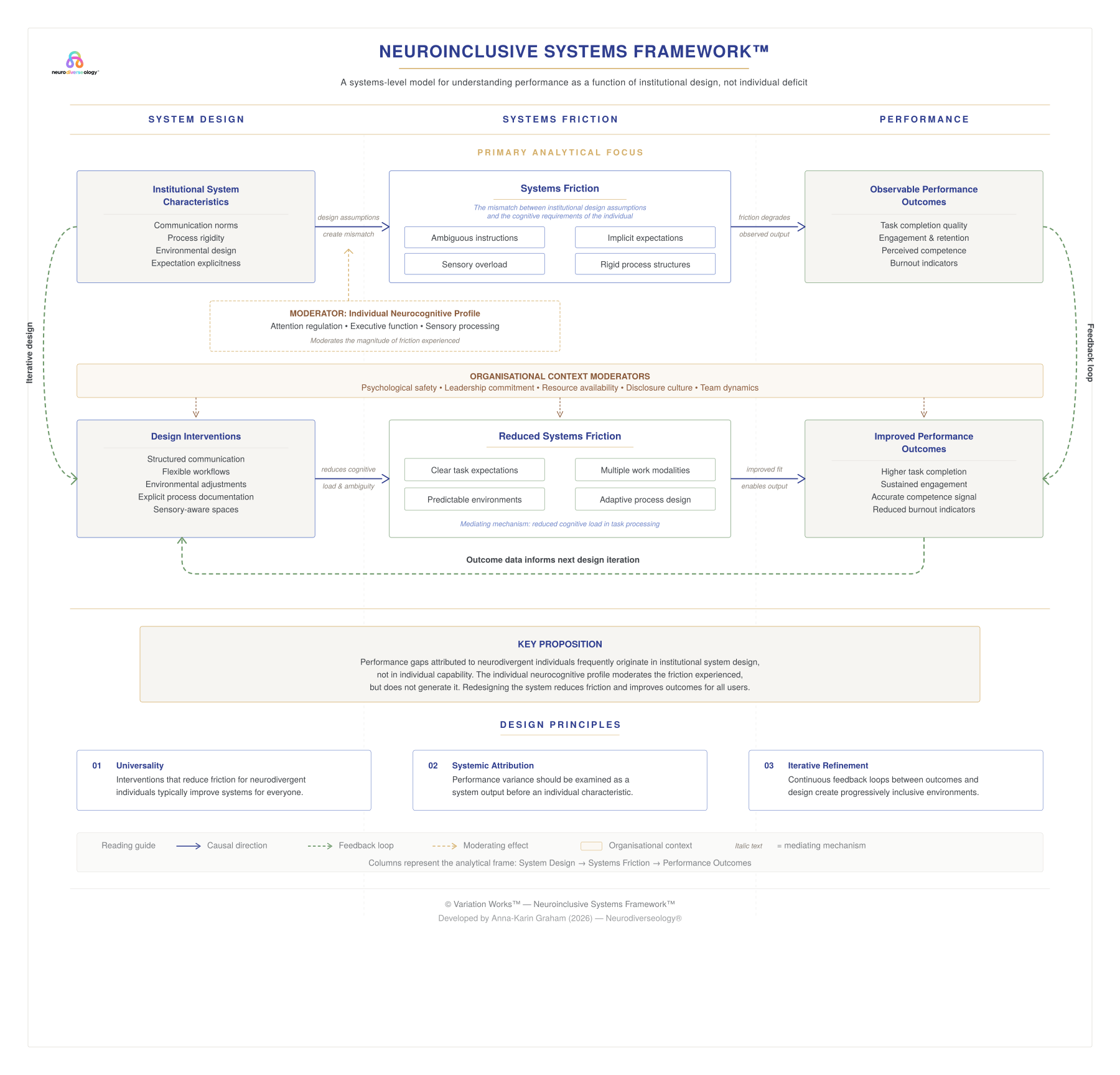

This article applies the concept of systems friction, introduced in the Neuroinclusive Systems Framework™, directly to higher education assessment and feedback practices. It identifies where friction typically accumulates, what the research says about the cognitive cost, and how institutions can begin diagnosing and addressing it using the three domains of the Neuroinclusive Systems Index™ Rapid Diagnostic Audit.

Figure 1. The Neuroinclusive Systems Framework™ illustrates how institutional system design creates cognitive demand, how mismatch produces systems friction, and how friction governs observable performance outcomes. Developed by Anna-Karin Graham (2026). Neurodiverseology®.

Why Neuroinclusive Assessment Design Is a Systems Problem

Assessment is the mechanism through which higher education institutions communicate expectations, evaluate capability, and generate performance signals. It determines whether a student understands what is being asked, can demonstrate what they know, and receives feedback that enables improvement. When any part of this mechanism is unclear, inconsistent, or structurally inaccessible, it generates systems friction: avoidable cognitive, procedural, and structural barriers that consume mental resources before the student even begins the task.

For neurodivergent students, assessment friction does not just reduce performance. It compounds. A single ambiguous brief generates clarification-seeking behaviour that increases anxiety. Anxiety reduces executive function capacity. Reduced executive function makes it harder to initiate work, manage time, and sustain attention. And when the feedback loop that follows is itself unclear or delayed, the student loses the information needed to calibrate their next attempt. The system, not the student, is producing the failure cascade.

The Hidden Curriculum in Assessment Design

Higher education assessment has always carried a hidden curriculum: a set of unstated expectations about what students should already know before they begin an assessment task. These include assumptions about how to interpret marking criteria, how to identify what a “good” submission looks like without an exemplar, and how to infer the relative importance of different assessment components from minimal cues.

For neurotypical students, navigating this hidden curriculum typically involves drawing on social learning, peer comparison, and implicit pattern recognition from prior educational experiences. For neurodivergent students, particularly those with autism, ADHD, or dyslexia, these informal decoding mechanisms are often less available or more effortful. The result is a cognitive demand mismatch: the assessment is not testing what it claims to test. It is testing the student’s ability to decode the system around the assessment.

Hamilton and Petty (2023) reinforced this point in their conceptual analysis of compassionate pedagogy for neurodiversity in higher education, arguing that minimising ambiguity at all levels, from materials to expectations to communication, is essential for reducing hidden curriculum barriers. They recommended that expectations be made fully explicit, that exemplars be used wherever appropriate, and that educators continuously reflect on the accessibility of their own communication.

The Three Domains of Neuroinclusive Assessment Friction

The Neuroinclusive Systems Index™ Rapid Diagnostic Audit, developed under the Neuroinclusive Systems Framework™, identifies three operational domains where assessment-related friction concentrates in higher education. Each domain corresponds to a specific layer of the assessment lifecycle, from the moment a student receives a brief to the moment they receive feedback and determine their next steps.

Domain A: Brief Clarity and Expectation Transparency

The first and highest-impact friction point in any neuroinclusive assessment system is the brief itself. When a task brief is incomplete (when it omits the purpose of the task, the expected output format, the evaluation criteria, the weighting, or the deliverable method), students are forced to interpret what is missing. This interpretive labour is a form of extraneous cognitive load, and research consistently shows that neurodivergent students bear a disproportionate share of it.

Le Cunff et al. (2024b) found that neurodivergent students in higher education reported significantly higher extraneous cognitive load than neurotypical peers, and that this difference was driven by how information was presented, not by the inherent difficulty of the material. Their companion focus group study (Le Cunff et al., 2024a) identified that a lack of clarity about which parts of a curriculum were mandatory, where to start with reading lists, and how to prioritise tasks created additional barriers for neurodivergent students that neurotypical students did not report.

The practical implications are direct. A neuroinclusive brief should state, on a single page: the purpose of the task, the specific deliverable, the output format, the weighting of evaluation criteria, the word count or scope parameters, and the submission method. If a student needs to open multiple documents, cross-reference module handbooks, or email a tutor to understand what is expected, the brief is generating friction.
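The checklist above can be turned into a simple completeness check that a programme team runs before a brief is released. The following is a minimal sketch, not part of the Audit itself: the `AssessmentBrief` class, its field names, and the `missing_elements` helper are hypothetical and only mirror the six elements listed in the paragraph above.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class AssessmentBrief:
    """The six one-page elements; field names are illustrative assumptions."""
    purpose: Optional[str] = None
    deliverable: Optional[str] = None
    output_format: Optional[str] = None
    criteria_weighting: Optional[str] = None
    scope: Optional[str] = None              # word count or scope parameters
    submission_method: Optional[str] = None


def missing_elements(brief: AssessmentBrief) -> list[str]:
    """Return the names of elements that are absent or blank in the brief."""
    return [
        f.name
        for f in fields(brief)
        if not (getattr(brief, f.name) or "").strip()
    ]


# Example brief with invented content: two elements have been left out.
brief = AssessmentBrief(
    purpose="Evaluate a published study design",
    deliverable="Critical review",
    output_format="Written report (PDF)",
    scope="1,500 words",
)
print(missing_elements(brief))  # ['criteria_weighting', 'submission_method']
```

Any element the check returns is something a student would otherwise have to infer, look up elsewhere, or ask about.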

In the Neuroinclusive Systems Index™ Rapid Diagnostic Audit, brief clarity is assessed through four indicators: whether briefs are scannable and complete, whether success criteria are explicit and plain-language, whether annotated exemplars are provided, and whether key milestones are published with specific dates. Institutions that score below 2.5 on this domain are operating in a high-friction environment, and brief clarity should be the first intervention target.

Domain B: Cognitive Load and Accessibility

The second domain addresses the structural accessibility of assessment materials and processes. This goes beyond format flexibility, though that matters too, and into the architecture of how information is delivered to students before, during, and around the assessment.

Cognitive load accumulates when instructions are delivered in dense, unstructured paragraphs. It increases when materials are released late, scattered across multiple platforms, or updated without notification. It spikes when students are expected to manage multiple deadlines with no visible timeline. And it compounds when the process for requesting reasonable adjustments is itself unclear, requiring neurodivergent students to navigate a secondary bureaucratic system just to access the primary one.

Clouder et al. (2020), in their narrative synthesis of neurodiversity in higher education, identified that assessment methods frequently penalise neurodivergent students and that academic workload structures are often inflexible, increasing the risk of attrition and failure. They noted that while universal design for learning (UDL) principles are referenced in policy, their implementation remains inconsistent, and students frequently have difficulty obtaining appropriate adjustments.

McDowall and Kiseleva (2024) reached similar conclusions in their rapid review of supports for neurodivergent students in higher education. Their synthesis identified that the evidence base focuses heavily on individual skills and learning strategies rather than on institutional and structural outcomes, such as completion rates, progression, and system-level accessibility. The implication is clear: higher education has invested in supporting individual students within flawed systems rather than redesigning the systems themselves.

In the Audit, cognitive load and accessibility are assessed through four indicators: whether instructions are chunked into headings, bullets, and sequential steps; whether materials are released in advance and centralised; whether format flexibility exists with equivalent evaluation criteria; and whether reasonable adjustments are predictable through a published menu rather than a reactive, case-by-case process.

Domain C: Feedback System Reliability

The third domain, and arguably the one most overlooked in neuroinclusion discussions, is feedback. Assessment does not end when a student submits their work. The feedback they receive determines whether they can calibrate future performance, build confidence in their understanding of expectations, and maintain engagement with the system over time.

When feedback is delayed beyond a stated timeframe, inconsistent between markers, or unstructured in its format, it removes the predictability that neurodivergent students need to learn from the assessment process. If one marker provides detailed, criterion-referenced commentary and another provides a single paragraph of general impressions, the student has no reliable signal to work with. The friction is not in the feedback content. It is in the variance.

Research on neurodivergent student experiences in higher education has consistently identified feedback as a critical pain point. Students report difficulty understanding what markers are asking them to improve, confusion when feedback does not align with the brief’s stated criteria, and frustration when escalation pathways are unclear or unavailable (Clouder et al., 2020; McDowall & Kiseleva, 2024). For neurodivergent students navigating executive function differences, inconsistent feedback creates a particular burden: without a reliable pattern to detect, each new assessment feels like a fresh interpretive challenge rather than a step in a learning sequence.

In the Audit, feedback system reliability is assessed through four indicators: whether feedback is timely and consistent; whether it is structured as keep / stop / start with at least one specific next step; whether staff interpretation is standardised through shared exemplars and moderation; and whether a clear escalation pathway exists for anyone who finds instructions or feedback unclear.

What the Dropout Data Is Actually Telling Us

When we look at neurodivergent dropout statistics through a systems friction lens, the narrative changes. The question is not “why can’t these students cope?” It is “what in our assessment, feedback, and adjustment systems is generating avoidable friction that pushes capable students out?”

The data supports this reframing. Autistic students in the UK have the highest dropout rates of any disability group, and their attainment of upper classifications remains the lowest (North East Autism Society, 2025; Office for Students). In Australia, a 2024 ecological systems study published in Higher Education involving in-depth interviews with neurodivergent HE students found that participants reported struggling with tests due to recall and focus, with group assessments due to executive functioning demands, and with oral presentations due to punitive requirements around body language and eye contact. Students described general difficulty understanding what assessments were asking of them, a direct indicator of brief clarity failure.

The British Psychological Society (Farrant et al., 2022) has argued that higher education has historically maintained multiple barriers to access for neurodivergent students. Their 15 recommendations for practice included a review of curriculum design and assessment strategies, revision of language to ensure inclusivity, and training for all staff. Yet the persistence of dropout differentials suggests that these recommendations have not yet translated into structural system change at the level of assessment design.

Neurodivergent students are more likely to drop out not because they cannot do the work, but because the systems surrounding the work (the briefs, the feedback loops, the adjustment processes) generate friction that erodes capacity over time. McDowall and Kiseleva (2024) stated directly that neurodivergent students are more likely to leave higher education, and that institutional environments, structures, and processes may not adequately address their needs. The evidence points toward system-level redesign as the appropriate response.

For a broader examination of why systems friction, not individual deficit, drives neuroinclusive performance gaps across both education and employment, see Article 1 in this series.

From Diagnosis to Redesign: Practical Steps for Institutions

The transition from recognising assessment friction to reducing it does not require a complete overhaul of institutional systems. It requires a targeted, evidence-informed approach that begins with the highest-friction points and produces measurable improvements.

The Neuroinclusive Assessment & Feedback Systems Framework™, developed as the flagship applied model within the Neuroinclusive Systems Framework™, operationalises this through a Diagnose → Design → Deploy → Demonstrate approach. Each stage maps directly to the three audit domains.

Start With an Assessment Friction Audit

Before redesigning anything, institutions need to know where friction is actually occurring. The Neuroinclusive Systems Index™ – Rapid Diagnostic Audit provides a structured tool for this purpose: a 12-item, Likert-scale assessment that produces a mean friction score across brief clarity, cognitive load, and feedback reliability.

Programme teams, disability services, or quality assurance leads can use the Audit to establish a baseline. Scores between 1.0 and 2.4 indicate high systems friction and signal that structural barriers are likely contributing to student disengagement, clarification overload, and attrition. Scores between 2.5 and 3.9 indicate moderate friction: clarity is mixed and needs targeted improvement, starting with the lowest-scoring items. Scores between 4.0 and 5.0 indicate low friction, where the focus shifts to maintaining consistency and introducing lightweight monitoring.
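As an illustration of how the bands above apply to a set of responses, here is a minimal sketch in Python. It rests on several assumptions that the article does not confirm: the function names are hypothetical, each of the three domains is taken to cover four consecutive items (1–4, 5–8, 9–12), and a mean that falls between two published bands (for example 2.45) is assigned to the higher-friction band. Note that a lower score means more friction.

```python
# Hypothetical scoring helper; names and the item-to-domain mapping are assumptions.
DOMAIN_ITEMS = {
    "A: brief clarity": slice(0, 4),
    "B: cognitive load and accessibility": slice(4, 8),
    "C: feedback reliability": slice(8, 12),
}


def friction_band(score: float) -> str:
    """Return the friction band for a mean score; lower scores mean more friction."""
    if not 1.0 <= score <= 5.0:
        raise ValueError("mean score must lie between 1.0 and 5.0")
    if score < 2.5:   # published band: 1.0 to 2.4
        return "high"
    if score < 4.0:   # published band: 2.5 to 3.9
        return "moderate"
    return "low"      # published band: 4.0 to 5.0


def score_audit(responses: list[int]) -> dict:
    """Compute overall and per-domain mean scores from 12 Likert responses (1-5)."""
    if len(responses) != 12 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("expected 12 responses, each an integer from 1 to 5")

    def summarise(items: list[int]) -> dict:
        mean_score = sum(items) / len(items)
        return {"mean": round(mean_score, 2), "band": friction_band(mean_score)}

    result = summarise(responses)
    result["domains"] = {
        name: summarise(responses[items]) for name, items in DOMAIN_ITEMS.items()
    }
    return result


# Invented example responses for one programme.
example = score_audit([2, 2, 1, 3, 3, 4, 3, 2, 4, 3, 4, 4])
print(example["mean"], example["band"])  # 2.92 moderate
for name, summary in example["domains"].items():
    print(name, summary)
```

In this invented example the overall mean sits in the moderate band, but the brief clarity domain on its own averages 2.0 and falls in the high-friction band, which is why looking at domain-level scores alongside the overall mean helps identify the first intervention target.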

Target the Highest-Impact Interventions First

Not all assessment friction carries equal weight. Research and practice consistently identify two areas that produce the greatest return when addressed:

- Brief clarity (items 1–2 in the Audit). Making task briefs complete, scannable, and explicit is the single highest-leverage intervention available. Every hour invested in improving brief design saves multiples in reduced clarification requests, reduced student anxiety, and reduced marking disputes downstream.

- Feedback reliability (items 9–10 in the Audit). Moving from unstructured feedback to a consistent keep / stop / start format with at least one actionable next step transforms feedback from an interpretive puzzle into a usable performance signal. This reduces the cognitive labour of decoding what markers mean and increases the likelihood that students will act on the feedback they receive.

These two interventions address the entry and exit points of the assessment lifecycle. When both are functioning, the system becomes more predictable, and predictability is one of the most powerful neuroinclusive design principles available.
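One way to make the keep / stop / start format consistent across markers is to capture feedback in a fixed structure and check it before release. The sketch below is illustrative only: the `StructuredFeedback` class and its `problems` method are hypothetical, and the rule that every section must contain at least one comment is an assumption (the article only specifies at least one specific next step).

```python
from dataclasses import dataclass, field


@dataclass
class StructuredFeedback:
    """Keep / stop / start feedback with explicit next steps (illustrative)."""
    keep: list[str] = field(default_factory=list)
    stop: list[str] = field(default_factory=list)
    start: list[str] = field(default_factory=list)
    next_steps: list[str] = field(default_factory=list)

    def problems(self) -> list[str]:
        """List structural gaps a marker should fix before releasing feedback."""
        issues = []
        for section in ("keep", "stop", "start"):
            # Assumption: an empty section counts as a gap.
            if not getattr(self, section):
                issues.append(f"'{section}' section is empty")
        if not self.next_steps:
            issues.append("no specific next step given")
        return issues


# Invented example: the marker has not yet written a next step.
draft = StructuredFeedback(
    keep=["Clear argument structure in sections 1 and 2"],
    stop=["Citing sources without page numbers"],
    start=["Linking each paragraph back to the marking criteria"],
)
print(draft.problems())  # ['no specific next step given']
```

Because every marker fills in the same four fields, students see the same shape of feedback regardless of who assessed the work.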

Measure Friction Reduction, Not Just Satisfaction

Institutions frequently measure assessment quality through student satisfaction surveys. While useful, satisfaction data does not directly measure whether systems friction has been reduced. Neuroinclusive assessment monitoring requires operationally embedded metrics: the volume of clarification requests received before submission deadlines, the number of feedback-related escalations or disputes, the rate at which marks are moderated or adjusted, and the consistency of scoring across different assessors.

These indicators are already generated by most institutional systems. They just are not being tracked as friction signals. Reframing them that way, and reporting them alongside satisfaction data, gives leadership teams a clearer picture of where assessment systems are working and where they are generating avoidable cost.
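The four operational metrics named above can be reported as simple rates per assessment cycle. The following is a rough sketch under stated assumptions: the `AssessmentCycle` record, its field names, and the choice of "spread between marker means on a shared sample" as the consistency measure are all hypothetical, and the figures are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class AssessmentCycle:
    """Counts an institution might already hold for one assessment (illustrative)."""
    submissions: int
    clarification_requests: int       # received before the submission deadline
    feedback_escalations: int         # feedback-related escalations or disputes
    marks_changed_at_moderation: int
    marker_scores: dict[str, list[float]]  # marker -> marks on a shared sample


def friction_signals(cycle: AssessmentCycle) -> dict[str, float]:
    """Express each friction signal per 100 submissions, plus marker spread."""
    def per_100(count: int) -> float:
        return round(100 * count / cycle.submissions, 1)

    marker_means = [sum(s) / len(s) for s in cycle.marker_scores.values()]
    return {
        "clarifications_per_100": per_100(cycle.clarification_requests),
        "escalations_per_100": per_100(cycle.feedback_escalations),
        "moderation_changes_per_100": per_100(cycle.marks_changed_at_moderation),
        # Gap between the most and least generous marker on the same scripts.
        "marker_mean_spread": round(max(marker_means) - min(marker_means), 1),
    }


# Invented figures for a single assessment.
cycle = AssessmentCycle(
    submissions=120,
    clarification_requests=54,
    feedback_escalations=6,
    marks_changed_at_moderation=18,
    marker_scores={"marker_1": [62, 58, 66], "marker_2": [55, 53, 60]},
)
print(friction_signals(cycle))
```

Tracking these values before and after a brief or feedback redesign gives a direct view of whether friction has fallen, independent of satisfaction scores.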

For organisations seeking to apply similar diagnostic approaches to workplace systems, including recruitment, onboarding, and performance review, see Article 3 in this series.

The Universality Principle in Assessment Design

One of the most consistent findings across the neuroinclusive assessment literature is that interventions designed to reduce friction for neurodivergent students improve assessment quality for all students. Clearer briefs help every student. Structured feedback supports every learner. Predictable adjustment pathways reduce anxiety across the student body.

This is the universality principle in action. Neurodivergent students encounter assessment friction first and most acutely because of differences in how they process ambiguity, manage working memory, and navigate implicit expectations. But the underlying design flaws (incomplete briefs, inconsistent feedback, unclear escalation routes) affect everyone. Fixing them is not a special accommodation. It is an improvement in assessment quality that produces better outcomes at every level.

Hamilton and Petty (2023) articulated this clearly: designing learning environments that minimise ambiguity, utilise exemplars, and make expectations fully explicit does not just support neurodivergent students. It creates conditions in which all students can engage more effectively. The neuroinclusive lens does not narrow the focus. It sharpens it.

Frequently Asked Questions About Neuroinclusive Assessment in Higher Education

What is neuroinclusive assessment design?

Neuroinclusive assessment design is a systems-level approach to structuring assessment briefs, evaluation criteria, feedback practices, and adjustment pathways so that neurodivergent students can demonstrate their capability without bearing avoidable cognitive load from the assessment process itself. It focuses on reducing systems friction rather than adding individual accommodations after the fact.

Why do neurodivergent students have higher dropout rates in higher education?

Research consistently identifies institutional barriers, including unclear assessment briefs, inconsistent feedback, inflexible processes, and complex adjustment systems, as contributing factors. These barriers generate cumulative systems friction that erodes engagement, confidence, and capacity over time, independent of academic ability.

What is the Neuroinclusive Systems Index™ Rapid Diagnostic Audit?

The Neuroinclusive Systems Index™ Rapid Diagnostic Audit is a 12-item diagnostic tool developed under the Neuroinclusive Systems Framework™. It assesses three domains (brief clarity, cognitive load and accessibility, and feedback system reliability) and produces a mean friction score that categorises systems as high, moderate, or low friction. It is designed as a practical entry point for institutions wanting to identify where their assessment systems are generating avoidable barriers.

Where should an institution start when improving neuroinclusive assessment?

Brief clarity and feedback reliability produce the highest return on effort. Ensure every assessment brief states the purpose, task, format, weighting, and deliverable method on a single page. Standardise feedback using a keep / stop / start structure with at least one specific next step. These two changes address the entry and exit points of the assessment lifecycle and reduce friction across the board. For a structured diagnostic, book a Discovery Call with Neurodiverseology®.

Does neuroinclusive assessment design only help neurodivergent students?

No. Research consistently shows that reducing ambiguity, improving feedback consistency, and making expectations explicit improves outcomes for all students. Neurodivergent students encounter the friction first and most acutely, but the structural improvements benefit the entire student population.

Assessment Systems Do Not Need More Awareness. They Need Less Friction.

Higher education has spent over a decade building awareness of neurodiversity. Staff training programmes exist. Disability services have expanded. Inclusive language appears in strategy documents. Yet the dropout differentials persist, attainment gaps remain, and neurodivergent students continue to report that navigating the assessment system requires more cognitive effort than the academic work itself.

The reason is straightforward. Awareness does not redesign briefs. Training does not standardise feedback. Policy language does not publish adjustment menus. These are structural interventions that require systems-level change, and systems-level change starts with diagnosis.

The tools are available. The Neuroinclusive Systems Framework™ provides the analytical lens. The Neuroinclusive Assessment & Feedback Systems Framework™ provides the implementation model. The Neuroinclusive Systems Index™ – Rapid Diagnostic Audit provides the measurement instrument. What remains is institutional willingness to ask: where are our assessment systems generating friction, and what is the cost?

When institutions answer that question honestly, they do not just become more neuroinclusive in their assessment design. They build clearer, more consistent, and more effective systems that every student, and every staff member, benefits from. That is not an accommodation. That is better assessment design. And it starts with diagnosing the systems friction.

References

Clouder, L., Karakus, M., Cinotti, A., Ferreyra, M. V., Amador Fierros, G., & Rojo, P. (2020). Neurodiversity in higher education: A narrative synthesis. Higher Education, 80(4), 757–778. https://doi.org/10.1007/s10734-020-00513-6

Farrant, F., Owen, E., Hunkins-Beckford, F. L., & Jacksa, M. (2022). Celebrating neurodiversity in higher education. The British Psychological Society. https://www.bps.org.uk/psychologist/celebrating-neurodiversity-higher-education

Hamilton, L. G., & Petty, S. (2023). Compassionate pedagogy for neurodiversity in higher education: A conceptual analysis. Frontiers in Psychology, 14, Article 1093290. https://doi.org/10.3389/fpsyg.2023.1093290

Le Cunff, A.-L., Dommett, E. J., & Giampietro, V. (2024a). Neurodiversity and cognitive load in online learning: A focus group study. PLOS ONE, 19(4), Article e0301932. https://doi.org/10.1371/journal.pone.0301932

Le Cunff, A.-L., Dommett, E. J., & Giampietro, V. (2024b). Neurodiversity positively predicts perceived extraneous load in online learning: A quantitative research study. Education Sciences, 14(5), Article 516. https://doi.org/10.3390/educsci14050516

McDowall, A., & Kiseleva, M. (2024). A rapid review of supports for neurodivergent students in higher education: Implications for research and practice. Neurodiversity, 2. https://doi.org/10.1177/27546330241291769

North East Autism Society. (2025). Autistic students most likely to drop out of university: Investigation. https://www.ne-as.org.uk/everyday-equality/everyday-equality-education/autistic-students-most-likely-to-drop-out-of-university-investigation/